You typed a prompt, the AI returned an image (or song, or article), and now there's a useful but awkward question staring back at you: who owns AI-generated content? Can you sell it? Can someone else copy it freely? Does it matter what country you're in, or what platform you used?

The honest answer is: it depends, more than almost any other digital ownership question. Copyright treats human authorship as the starting point, and AI complicates that starting point. As of 2026, no major jurisdiction has fully settled the rules, and the answers diverge sharply across the US, UK, EU, China, and Japan. This guide walks through what the major regions currently say, what platform terms typically grant you on top of (or instead of) copyright, and what creators should actually do in practice.

The short answer

Three layers of "ownership" interact whenever you generate something with AI:

- Copyright — the legal monopoly to copy, distribute, and adapt a work. Most jurisdictions still require a human author, with significant exceptions.

- Platform terms of service — what the AI tool's contract grants you. Usually a license to use the output commercially, but the details vary wildly.

- Underlying training-data rights — whether the model itself was lawfully trained, which is the subject of dozens of pending lawsuits worldwide and can affect downstream use.

You can have full platform rights to use an output and still have no copyright over it — meaning anyone else who finds it can copy it freely. Conversely, you can hold copyright over the human-authored arrangement of AI elements while the AI elements themselves remain unprotected. The nuance matters.

Why this is so tangled: the human-authorship rule

Most copyright systems trace back to international treaties (the Berne Convention) that anchor protection in human creativity. Courts and copyright offices in many jurisdictions have read this to mean that purely AI-generated outputs — where a human only typed a prompt — don't qualify for copyright at all, because there's no human author of the resulting expression. Other jurisdictions disagree, either by statute or by recent court rulings. Below is the country-by-country picture.

Jurisdiction-by-jurisdiction breakdown

United States

The US Copyright Office (USCO) has been the loudest voice on this question. Its consistent position, reaffirmed in its multi-part Copyright and Artificial Intelligence report (the second part of which was published in January 2025), is: purely AI-generated outputs are not copyrightable. Copyright requires human authorship.

What is protectable in the US:

- Human-authored elements you contribute (text you wrote, edits you made, the selection and arrangement of AI elements into a larger work).

- The compilation or arrangement of AI-generated material into a creative whole (as in Zarya of the Dawn, where the comic's text and image arrangement were registered, but the individual AI-generated images were not).

What's not protectable:

- An image, song, or article generated from a prompt alone — even an elaborate one. The Copyright Office has held that prompts function more like instructions than authorship.

- Works the USCO has explicitly refused to register, including A Recent Entrance to Paradise (Thaler) and the AI-generated image components of Théâtre D'opéra Spatial.

The DC Circuit Court of Appeals affirmed the USCO's denial in Thaler v. Perlmutter in 2025, holding that the Copyright Act's "author" requires a human. This is now binding precedent in the DC Circuit and persuasive nationwide.

United Kingdom

The UK is the most prominent statutory outlier. Section 9(3) of the Copyright, Designs and Patents Act 1988 explicitly contemplates "computer-generated works", defined in s. 178 as works generated by a computer "in circumstances such that there is no human author of the work", and assigns authorship to "the person by whom the arrangements necessary for the creation of the work are undertaken." Protection lasts 50 years from the end of the calendar year in which the work was made (s. 12(7)), rather than the standard life-plus-70.

In practice this means a UK user prompting an AI tool may have a colorable claim to copyright in the output, with themselves as author and a 50-year term, a statutory position that the US, the EU, and Japan do not share. However:

- The UK government held consultations in 2024–2025 about reforming or repealing s. 9(3) precisely because of AI; the provision's future is uncertain.

- The "originality" requirement in UK copyright (after the EU's Infopaq decision, which still influences UK case law) requires the author's "own intellectual creation," which AI outputs may struggle to satisfy. Courts haven't definitively resolved the tension.
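
The difference between the two terms is easiest to see as arithmetic. The sketch below assumes both terms are counted from the end of a calendar year (the year the work was made for a computer-generated work, the year the author died for the standard term). The function names and example years are invented for illustration, and this is not a substitute for reading the statute.

```python
def computer_generated_expiry(year_made: int) -> int:
    """Last calendar year of protection for a UK computer-generated work.

    Assumption: 50 years counted from the end of the calendar year
    in which the work was made.
    """
    return year_made + 50


def standard_expiry(year_author_died: int) -> int:
    """Last calendar year of protection under the standard life-plus-70 term.

    Assumption: 70 years counted from the end of the calendar year
    in which the author died.
    """
    return year_author_died + 70


# Hypothetical example: an image generated in 2026, compared with a
# human-authored work whose author dies in 2070.
print(computer_generated_expiry(2026))  # 2076
print(standard_expiry(2070))            # 2140
```

Even if s. 9(3) does apply, the protection on a prompt-generated image would lapse decades earlier than it would for a comparable human-authored work.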

European Union

EU copyright requires that a work be the "author's own intellectual creation" reflecting their "free and creative choices" (the standard from Infopaq, Painer, and other CJEU rulings). Pure AI outputs typically fail this test because the "creative choices" are made by the model, not the user.

Key EU points as of 2026:

- No copyright for purely AI-generated works in nearly all member states. Human-edited or human-arranged works can qualify based on the human contribution.

- The EU AI Act (in force since August 2024) imposes transparency obligations: providers of generative models must publish summaries of copyrighted training data, and AI-generated content (especially deepfakes) must be labeled. Transparency is not the same as ownership, but it interacts with it.

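- Text and data mining exceptions (Articles 3 and 4 of the DSM Directive) allow training on lawfully accessible works. Article 3 covers scientific research by research organisations and cultural heritage institutions; under the general exception in Article 4, rightsholders can opt out, for online content typically via machine-readable means. This affects what's legally inside many EU-trained models.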
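
To make "machine-readable opt-out" concrete: one commonly discussed mechanism is a `robots.txt` rule that turns away a specific AI crawler. Whether `robots.txt` alone counts as a legally sufficient reservation is an open question, so treat the following as a sketch under that assumption. The crawler name `ExampleAIBot` and the URL are invented for illustration; the parser is Python's standard `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt a rightsholder might publish.
# "ExampleAIBot" is an invented user-agent name, not a real crawler.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Allow: /
"""


def may_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


url = "https://example.com/gallery/photo-1.jpg"
print(may_crawl(ROBOTS_TXT, "ExampleAIBot", url))     # False: the AI crawler is opted out
print(may_crawl(ROBOTS_TXT, "OrdinaryBrowser", url))  # True: everyone else is allowed
```

A crawler that honours the file would skip the site's content when collecting training data, while ordinary visitors and search crawlers are unaffected.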

China

China is the most permissive major jurisdiction toward AI-generated copyright. In November 2023, the Beijing Internet Court ruled in Li v. Liu that an AI-generated image (created in Stable Diffusion) was a protected work, and that the human user — who designed the prompt, selected the model, and chose among outputs — was the author. The court emphasized the user's "intellectual investment" in the prompt design and selection process.

Subsequent Chinese rulings have largely followed the same logic. As of 2026, China is the most user-friendly major jurisdiction for prompt-based AI authorship — though as a first-instance court ruling, Li v. Liu isn't binding nationwide and Chinese copyright case law continues to develop.

Japan

Japan's Copyright Act protects works that are "creative expressions of thoughts or sentiments" (Article 2(1)(i)), language traditionally read to require human creativity. Japan's Agency for Cultural Affairs has indicated that purely AI-generated works are not protected, while works with substantial human creative input can be.

Japan is also notable on the training side: Article 30-4 of its Copyright Act broadly permits use of copyrighted works for "non-enjoyment" purposes, which the government has interpreted to include AI training. This made Japan one of the most permissive training jurisdictions, though the rule has come under recent scrutiny.

Other notable jurisdictions

- Canada — Generally aligns with the US/EU human-authorship requirement; the Canadian government has held consultations but has not legislated specifically. Pure AI outputs are likely unprotected.

- Australia — Following Acohs v Ucorp and Telstra v Phone Directories, Australian copyright requires identifiable human authorship; AI-only outputs likely fail this test.

- India — Has flirted with both directions. The Copyright Office initially registered an AI-co-authored work (Suryast) and then issued a withdrawal notice; the legal status remains unsettled. Statutorily, India recognizes "computer-generated works" similarly to the UK.

- South Korea — Korean Copyright Commission has stated that AI-generated content without human creative input is not protected.

- Singapore — Likely follows the human-authorship line; has a text-and-data-mining exception (Section 244 of the Copyright Act 2021) similar to Japan's, focused on training.

- Hong Kong — Has a UK-style "computer-generated works" provision, but its application to modern generative AI hasn't been tested.

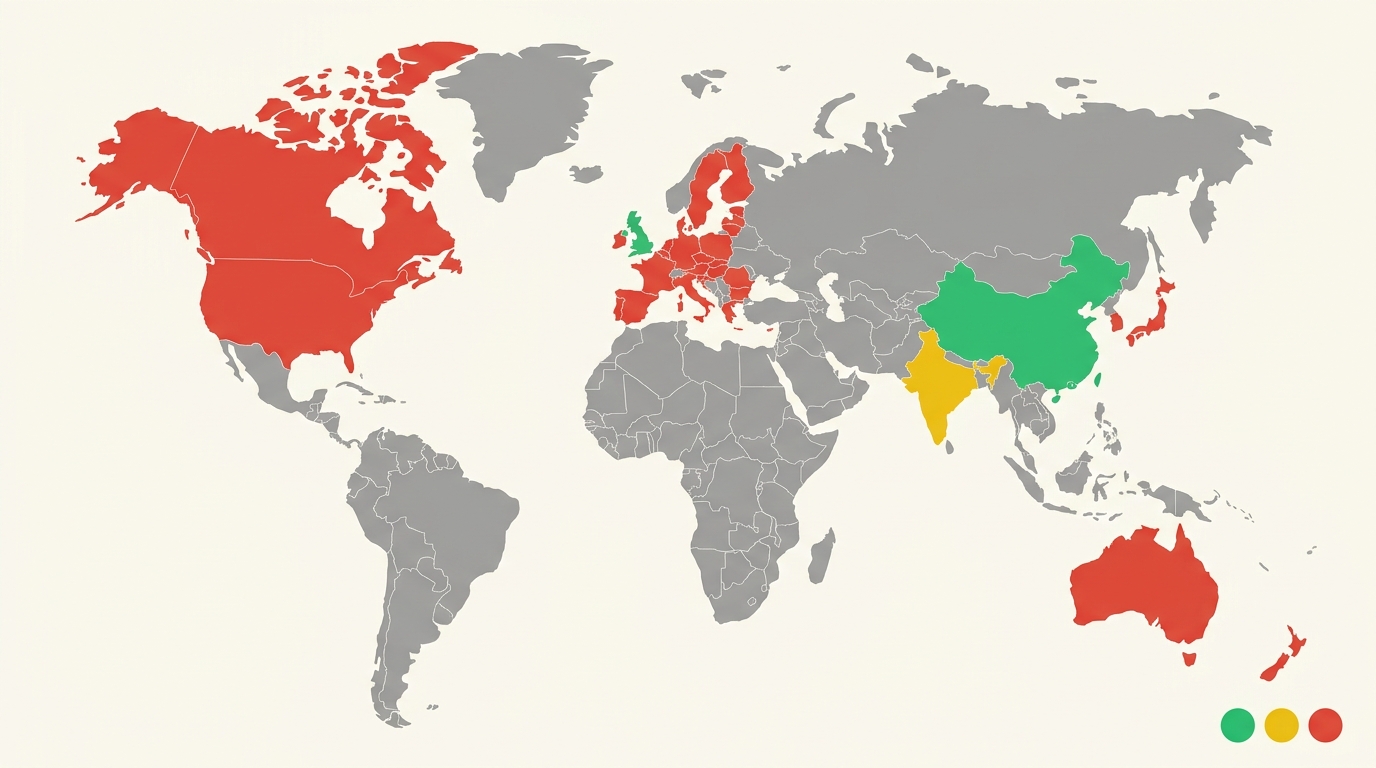

Side-by-side comparison

Copyright treatment of AI-generated content by jurisdiction (general position as of 2026)

| Jurisdiction | Pure AI output protected? | Who is the author? | Notable feature |
|---|---|---|---|
| United States | No (USCO + Thaler v. Perlmutter) | N/A (needs human author) | Human-arranged compilations may qualify |
| United Kingdom | Likely yes, under s. 9(3) CDPA | The person who made the arrangements | 50-year term; reform under review |
| European Union | No (requires "intellectual creation") | N/A (needs human author) | AI Act adds transparency duties |
| China | Yes, per Li v. Liu (2023) | The prompting user | Most user-friendly major jurisdiction |
| Japan | No for pure AI; yes if substantial human input | Human creator only | Permissive training rule (Art. 30-4) |
| Canada | Likely no | N/A (needs human author) | Government still consulting |
| Australia | Likely no | N/A (needs human author) | Strict identifiable-author requirement |
| India | Unsettled | Statute allows "computer-generated" works | Mixed registration history |
| South Korea | No | Human creator only | Clear administrative position |
| Hong Kong | Possibly yes | The arranging person (s. 11 CO) | UK-style provision, untested for AI |

Layer 2: what the platform's terms of service actually say

Even where copyright is murky or absent, most AI platforms grant you contractual rights to use the output — and those terms are often the most practical "ownership" question for day-to-day work. Typical patterns in 2026:

- "You own the output" language — common in OpenAI's ChatGPT/DALL·E terms, Anthropic's Claude, and many others. Read carefully: this typically means the platform assigns or grants any rights it has to you, but it does not (and cannot) grant copyright that doesn't exist under law in your jurisdiction.

- Commercial-use clauses — most paid plans permit commercial use. Some free plans restrict it. Some image platforms reserve the right to use your prompts and outputs for further model training unless you opt out.

- Indemnity clauses — a few enterprise tiers offer indemnification if a third party claims your output infringes their copyright (Microsoft, Google, OpenAI all offer some version for paying customers). This addresses the training-data risk, partially.

- Attribution and labeling — increasingly required. The EU AI Act mandates labeling of AI-generated media; some platforms automatically embed C2PA content credentials.

The practical takeaway: when copyright won't help you, your platform license probably will — but only against the platform itself. It does not stop a third party from copying an unprotected AI output once it's public.

Layer 3: training-data risk

A separate ownership question hangs over every AI output: was the model trained on copyrighted works without permission? In 2026 this is still being fought out in major lawsuits in the US (NYT v. OpenAI, Getty v. Stability AI, the consolidated authors-vs-OpenAI suits) and the UK (where the High Court handed down its judgment in Getty v. Stability AI in November 2025), alongside regulatory scrutiny in the EU under the AI Act's transparency requirements.

Most of these cases haven't reached final outcomes that bind downstream users — but a few practical implications already exist:

- Outputs that closely reproduce a training-set work (memorization) can infringe regardless of copyright in the AI output itself.

- Style imitation alone is not generally infringement, but commercial use of imagery that closely resembles a recognizable artist's work invites disputes.

- Enterprise indemnity programs (above) shift some of this risk to the platform.

Common scenarios

I generated an image for my blog. Can I use it commercially?

In most cases yes, under the platform's terms — but other people may also be able to copy it freely if it isn't protected by copyright in your jurisdiction. If exclusivity matters, consider editing the output substantially (your edits become protected human authorship) or commissioning original work.

I wrote a long article using AI to help. Do I own it?

Probably yes for the parts you authored or substantially edited. The question is what counts as "substantial." In the US, light editing is unlikely to meet the bar; significant rewriting, structural choices, and original analysis generally do.

I'm in the UK — does s. 9(3) cover my AI image?

You may have a colorable copyright claim for 50 years, but this hasn't been definitively tested in court for modern generative AI outputs, and reform is on the table. Don't rely on it for high-stakes commercial work without legal advice.

Someone copied an AI image I generated. Can I sue?

In the US, almost certainly not — there's nothing to enforce if no copyright exists. In China, possibly, under Li v. Liu's reasoning. In the UK, possibly under s. 9(3). Everywhere, you may also have separate claims (passing off, unfair competition, breach of platform terms) depending on the facts.

I sold an AI-generated NFT or print. Is the buyer's "ownership" real?

The buyer owns the physical object or token. Whether they own copyright depends on what you transferred and whether you had copyright to transfer in the first place. Get this in writing and don't overstate the rights you can pass on.

What creators should actually do

- Read the platform terms. They control more of your day-to-day rights than statute does. Look for ownership, commercial use, training opt-out, and indemnity clauses.

- Document your human contribution. If you edit, arrange, or significantly modify AI outputs, keep evidence (versioned files, prompt logs, edit history). It's the foundation of any future copyright claim.

- Don't overstate ownership in contracts. If you're licensing AI work to a client, describe what you're actually delivering — including whether copyright protection is uncertain.

- Label AI content where required. The EU AI Act and platform-level rules increasingly demand it. Failure to disclose can have its own legal consequences.

- For high-stakes commercial work, get legal advice. Especially for cross-border use, where you may be subject to multiple jurisdictions at once.
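
For the "document your human contribution" step, even a very small script can help. The sketch below is one possible approach, not a legal standard: it records each stage of a piece (prompt, raw AI output, your edit) with a UTC timestamp and a SHA-256 hash, and appends the record to a log file. The function names, field names, and file name are all invented for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_record(step: str, content: bytes, note: str) -> dict:
    """Build one provenance record: what was done, when, and a hash of the result."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "step": step,  # e.g. "prompt", "ai_output", "human_edit"
        "sha256": hashlib.sha256(content).hexdigest(),
        "note": note,
    }


def append_record(path: str, record: dict) -> None:
    """Append the record as one JSON line, leaving earlier entries untouched."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")


if __name__ == "__main__":
    # Hypothetical workflow: log the raw model output, then your rewritten version.
    raw = b"Raw text returned by the model"
    edited = b"My rewritten version with original analysis"
    append_record("provenance.jsonl", make_record("ai_output", raw, "unedited model output"))
    append_record("provenance.jsonl", make_record("human_edit", edited, "restructured and rewrote sections 2-4"))
```

The hashes let you show later that a given file matches what you logged at the time, and the differing hashes between the AI output and your edit show that a change was made. Keep the files themselves under version control alongside the log, since a hash only shows that a change happened, not how substantial it was.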

The bottom line

"Who owns AI-generated content?" doesn't have one answer in 2026 — it has at least three (copyright, contract, training risk) and they vary by country. The pragmatic position for most creators: assume your copyright in pure AI outputs is weak or non-existent in most major jurisdictions outside the UK and China; rely on the platform license for the right to use them; add meaningful human authorship if exclusivity matters; and watch this space, because the rules are still being written.